Developing hardware-driven puppeteering interfaces

to explore Human-Robot Interaction as guided improvisations in real-world contexts

The familiar picture of multi-purpose humanoid robots we see in science fiction is often an inefficient solution to most practical tasks. We are therefore more likely to see an increase in robots as consumer products that are relatively simple, specialised devices with limited sensory capabilities and autonomy, along the lines of a Roomba vacuum cleaner. Such robotic products may blend machine, creature or human characteristics in their overall form and expressivity. This makes it interesting and relevant to study HRI in the context of such simple behaviour-based robotic products.

The interactions people have with such robotic products in everyday life cannot be completely scripted or controlled but are considered to emerge in situated encounters between human and robot. The question this graduation project tackles is how the interplay between human and robot can be designed as guided improvisations. In other words, how can the design of a robot’s appearance and expressive behaviour help steer the interaction towards desired outcomes while still allowing for a meaningful level of spontaneity?

Hardware-driven puppeteering

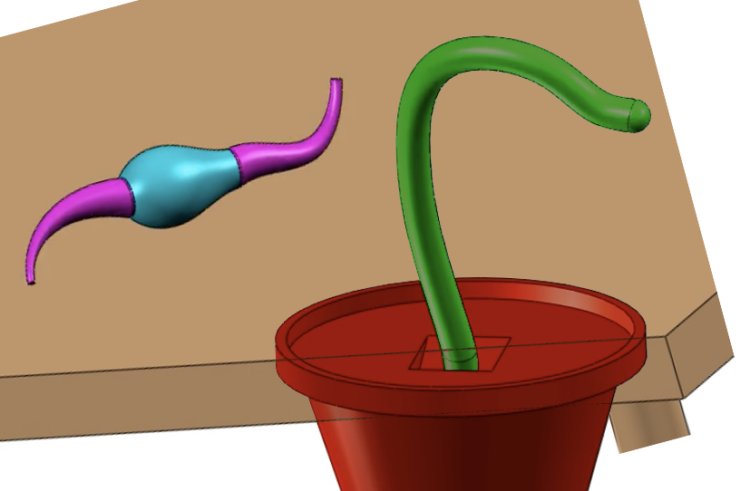

Puppeteering robots is a valuable approach to studying human-robot interactions because it allows the designer to gain insight into the interaction dynamics without first having to program a robot’s autonomous behaviour. By puppeteering a robot through a remote sensorimotor interface, the designer can perceive the world through its sensory capabilities (i.e., look through the robot’s eyes) and perform its behaviour through its motor capabilities. Based on the experience gained through these enactments, the human puppeteer can then translate discovered interaction strategies into machine learning algorithms.

StudyBuddy is introduced as a design context for researching emerging interactions through hardware-driven puppeteering interfaces. Due to the pandemic, domestic spaces have become offices, and kitchen tables have turned into sites for eating, socializing, and work.

StudyBuddy is a curious and playful little robot that inhabits your kitchen table and encourages you to balance your work and social life at home. It helps you to focus when you feel distracted, helps you take a break from work when tired, and cheers you up when you’re feeling down.

Who are we looking for?

We are looking for motivated students from either the IPD or DfI tracks with skill and interest in interaction design research and functional prototyping in the context of human-robot interaction and smart products. In this graduation project you will be asked to:

- Investigate how robotic products afford emerging interactions by investigating their expressiveness and interactivity within particular use contexts.

- Create a prototype that allows you to puppeteer it through a remote sensorimotor interface on a kitchen table in a controlled lab setting.

- Determine the minimal sensory capabilities and motor outputs required to achieve a meaningful level of robotic perception and expressiveness.

- Implement and experimentally evaluate candidate solutions for autonomous control.

Repeat steps 1 to 4 as necessary to refine the solution and move towards an effective, autonomous StudyBuddy prototype. Throughout this process, document and analyse your findings to better understand emergent behaviour, performativity and expressiveness in interactions between humans and simple robotic products.

Specific questions to be addressed include…

- What sorts of emergent behaviour can arise in the use of robotic products, and how (if at all) can we design HRI as guided improvisations?

- What is the significance of aesthetics in cultivating effective interaction between humans and robotic products?

- Can a simple reactive control strategy, akin to the behaviour-based approach first conceived by Rodney Brooks, support meaningful expression and HRI?
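To make the last question more concrete, the following is a minimal sketch of what a simple reactive control strategy could look like: a fixed-priority list of behaviours, each mapping the current sensor reading directly to an action, with no internal world model. This is a common simplification loosely inspired by behaviour-based robotics, not a full subsumption architecture and not a design prescribed by this project. The `Percept` fields, the behaviour names and the action strings are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass
class Percept:
    """Hypothetical minimal sensor snapshot; the fields are illustrative only."""
    person_near: bool
    obstacle_ahead: bool


# A behaviour maps a percept to an action name, or None when it does not apply.
Behaviour = Callable[[Percept], Optional[str]]


def avoid(p: Percept) -> Optional[str]:
    # Highest priority: move away from anything blocking the path.
    return "back_away" if p.obstacle_ahead else None


def greet(p: Percept) -> Optional[str]:
    # Expressive response when a person is detected nearby.
    return "wiggle" if p.person_near else None


def wander(p: Percept) -> Optional[str]:
    # Default behaviour; always applicable.
    return "wander"


# Ordered from highest to lowest priority (an assumed ordering).
LAYERS: Sequence[Behaviour] = (avoid, greet, wander)


def select_action(p: Percept, layers: Sequence[Behaviour] = LAYERS) -> str:
    """Return the action of the first behaviour that applies to the percept."""
    for behaviour in layers:
        action = behaviour(p)
        if action is not None:
            return action
    return "idle"  # no behaviour applied
```

Calling `select_action(Percept(person_near=True, obstacle_ahead=False))` would select the `greet` behaviour's action. Whether such direct sensor-to-action mappings can carry meaningful expression is exactly what the question above leaves open.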

Please contact Marco Rozendaal or Jordan Boyle for further inquiries.